How Does Perplexity Evaluate the Accuracy of Intent Suggestions?

TL;DR

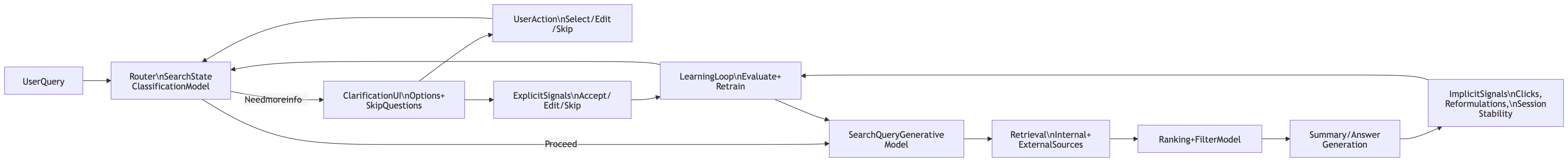

Perplexity’s Augmented Search Engine patent implies that intent suggestions are evaluated through a combined ML and product-metrics loop. A router predicts the next search phase (clarify, search, or summarize), the UI captures explicit feedback (choose an option, edit, or “Skip Questions and Read The Answer”), and the system measures downstream success (did retrieval plus summary stabilize the session?). For GEO, the practical play is to engineer content that reduces ambiguity early, pre-answers likely clarifications, and aligns with Perplexity’s Focus surfaces.

Introduction / Problem

Perplexity is not only ranking pages. In the patent, it runs a loop: receive a query, decide the next search phase, optionally ask for more user input, generate a search query, retrieve results, then generate a summary.

This is where intent suggestions matter. The UI can ask a clarifying question with multiple-choice options and also offers “Skip Questions and Read The Answer”.
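The loop described above can be sketched in a few lines of Python. This is only an illustration of the control flow, not the patent's implementation: `Phase`, `run_session`, and every `toy_*` helper are hypothetical names, and the models are replaced by trivial stand-in functions.

```python
from enum import Enum
from typing import Callable, List, Optional


class Phase(Enum):
    CLARIFY = "clarify"      # ask the user for more input
    SEARCH = "search"        # generate a search query and retrieve results
    SUMMARIZE = "summarize"  # stop and produce the answer


def run_session(
    query: str,
    classify: Callable[[List[str], List[str]], Phase],
    ask_user: Callable[[List[str]], Optional[str]],
    rewrite: Callable[[List[str]], str],
    retrieve: Callable[[str], List[str]],
    summarize: Callable[[List[str], List[str]], str],
    max_steps: int = 5,
) -> str:
    """Hypothetical sketch of the query -> phase -> clarify/search -> summary loop."""
    context: List[str] = [query]
    results: List[str] = []
    for _ in range(max_steps):
        phase = classify(context, results)
        if phase is Phase.CLARIFY:
            answer = ask_user(context)  # None models "Skip Questions and Read The Answer"
            if answer is None:
                # Assumption: a skip goes straight to the answer, searching once if needed.
                if not results:
                    results.extend(retrieve(rewrite(context)))
                break
            context.append(answer)
        elif phase is Phase.SEARCH:
            results.extend(retrieve(rewrite(context)))
        else:
            break
    return summarize(context, results)


# Toy stand-ins for the models (assumptions, for illustration only).
def toy_classify(context: List[str], results: List[str]) -> Phase:
    if results:
        return Phase.SUMMARIZE
    if len(context) == 1:
        return Phase.CLARIFY
    return Phase.SEARCH


def toy_rewrite(context: List[str]) -> str:
    return " ".join(context)


def toy_retrieve(search_query: str) -> List[str]:
    return [f"doc about {search_query}"]


def toy_summarize(context: List[str], results: List[str]) -> str:
    return f"{len(results)} result(s) for: {' '.join(context)}"
```

Passing `lambda ctx: "Tube"` as `ask_user` models a user who picks an option, while `lambda ctx: None` models a skip. In both cases the session still ends in retrieval plus a summary, which is why a wrong clarification costs the user a step without blocking the answer.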

The core problem: If an intent suggestion is wrong (wrong clarification, wrong mode, wrong rewrite), the session becomes noisy:

- more edits and reformulations,

- worse retrieval candidates,

- lower-quality summaries,

- weaker user satisfaction signals.

Solution

What the patent architecture implies about “intent accuracy”

1) Intent is routed, not guessed once

The router layer includes a Search State Classification Model, a User Prompt Generative Model, and a Search Query Generative Model.

So intent-suggestion accuracy comes down to one question: did the router pick the right next action, and the right clarifying question or query rewrite?

2) Feedback is captured explicitly

The intent-suggestion UI includes selectable options plus an explicit skip control. A skip can plausibly serve as a strong negative signal that the clarification was unnecessary or poorly targeted.

3) Accuracy is reinforced by downstream outcomes

The loop ends in retrieval plus summarization. This lets the system evaluate whether the intent suggestion improved the final outcome by observing user behavior after the suggestion, not only the suggestion itself.
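One way to picture how explicit feedback and downstream behavior could combine into a single label is the sketch below. The patent does not publish a scoring formula, so the action names, the weights, and the `SuggestionOutcome` and `score_suggestion` names are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights: selecting an option is the strongest positive signal,
# editing is partial agreement, and skipping counts as no credit.
ACTION_WEIGHTS = {"selected": 1.0, "edited": 0.5, "skipped": 0.0}

# Hypothetical penalty when the user reformulates the query after the summary.
REFORMULATION_PENALTY = 0.5


@dataclass
class SuggestionOutcome:
    action: str               # "selected", "edited", or "skipped"
    reformulated_after: bool  # did the user rewrite the query after the answer?


def score_suggestion(outcome: SuggestionOutcome) -> float:
    """Combine explicit feedback with a downstream signal into a 0..1 score."""
    score = ACTION_WEIGHTS[outcome.action]
    if outcome.reformulated_after:
        score -= REFORMULATION_PENALTY
    return max(score, 0.0)
```

Under these assumed weights, a selected option followed by a reformulation scores the same as an edited option with no reformulation, which reflects the idea that the suggestion is judged by what happens after it as well as by the click itself.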

4) The whole system is designed to be retrained

The patent describes a full ML lifecycle: data collection, feature engineering, training, model evaluation, prediction, validation/retraining, and deployment.

That strongly implies ongoing measurement of “intent suggestion accuracy” and iteration over time.

How it solves the problem (GEO takeaways you can apply)

Below are concrete GEO actions mapped to the patent’s router, UI feedback, and ranking stages.

1) Pre-answer the most likely clarifications

The patent UI example includes a clarifying question like “What type of vintage audio amplifier is it?” with choices such as “Tube” vs “Solid-State.”

GEO move: Add a short “Choose your case” block near the top of relevant pages:

- Option A vs Option B with 2–4 crisp differentiators

- “If you are not sure, here is how to identify it in 30 seconds”

- Then branch instructions by option

Why it helps: It reduces “clarification needed” events and should reduce skip behavior, aligning with the patent’s explicit skip pathway.

2) Optimize the first 200–300 words for routing confidence

The flow includes “determine next search phase,” then either request more input, perform search, or terminate with summary.

GEO move: Make the opening section classify the page fast:

- Persona: who it is for

- Outcome: what it helps achieve

- Constraints: price, geo, compatibility, timeframe

- Next step: checklist or decision path

Goal: Make the Search State Classification Model confident enough to proceed without extra clarification.

3) Align assets with Perplexity’s Focus surfaces

The patent UI shows a Focus selector: All, Academic, Writing, WolframAlpha, YouTube, Reddit.

GEO move: Publish intent-specific assets instead of one generic page:

- Academic-like: definitions, citations, methodology, limits

- How-to: step-by-step, troubleshooting, decision tree

- Community-style: FAQ, pitfalls, tradeoffs, real examples

Why it helps: You match the retrieval surface the user chose, improving “intent to source” alignment.

4) Win the candidate set via ranking and filtering

The patent includes an aggregation stage with a Ranking and Filter Model over internal and external retrieval.

GEO move: Add extraction-friendly anchors that survive filtering:

- Question-style section headers that mirror common follow-ups

- Conditional lists: “If X, do Y”

- Concrete thresholds and examples

- A tight summary that can be lifted into an answer with minimal rewriting

5) Track GEO metrics that mirror Perplexity’s intent-accuracy loop

You can measure your own performance using session-level proxies that align with the patent’s loop.

Suggested KPI set (your internal metrics):

- Clarification Capture Rate (CCR)

CCR = sessions_where_page_answers_top_followups / total_AI_sessions

- Intent Branch Coverage (IBC)

IBC = number_of_supported_variants / number_of_common_variants_observed

- Session Stabilization Rate (SSR)

SSR = sessions_with_no_reformulation_within_window / total_AI_sessions

These map cleanly to the patent’s request → clarify → retrieve → summarize sequence.
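The three ratios above can be computed from your own analytics with a small helper. This is a minimal sketch under stated assumptions: the `Session` fields and the `compute_kpis` function are hypothetical names, how you detect an "AI session" or a reformulation window is left to your own tracking, and IBC is interpreted here as the share of observed variants that your content supports.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Set


@dataclass
class Session:
    answered_top_followups: bool      # page answered the top follow-up questions
    reformulated_within_window: bool  # user rewrote the query within your chosen window


def _ratio(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when there is no data."""
    return numerator / denominator if denominator else 0.0


def compute_kpis(
    sessions: Iterable[Session],
    supported_variants: Set[str],
    observed_variants: Set[str],
) -> Dict[str, float]:
    sessions = list(sessions)
    total = len(sessions)
    return {
        # CCR = sessions_where_page_answers_top_followups / total_AI_sessions
        "CCR": _ratio(sum(s.answered_top_followups for s in sessions), total),
        # IBC = supported variants among those observed / observed variants
        "IBC": _ratio(len(supported_variants & observed_variants), len(observed_variants)),
        # SSR = sessions_with_no_reformulation_within_window / total_AI_sessions
        "SSR": _ratio(sum(not s.reformulated_within_window for s in sessions), total),
    }


# Example with made-up data: 4 sessions, 2 of 4 observed variants supported.
example = compute_kpis(
    [
        Session(True, False),
        Session(True, False),
        Session(True, True),
        Session(False, False),
    ],
    supported_variants={"tube", "solid-state"},
    observed_variants={"tube", "solid-state", "hybrid", "class-d"},
)
```

With this sample data, `example` holds a CCR of 0.75, an IBC of 0.5, and an SSR of 0.75. The zero-denominator guard keeps the helper usable on pages that have no recorded AI sessions yet.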